API Load Balancing Services: Essential Guide to Optimizing Performance and Reliability

In today’s digital landscape, where applications handle millions of requests daily, ensuring optimal performance and reliability has become paramount. API load balancing services represent a critical infrastructure component that distributes incoming network traffic across multiple servers, preventing any single server from becoming overwhelmed and ensuring seamless user experiences.

Understanding API Load Balancing Fundamentals

Load balancing operates on a simple yet powerful principle: distribute workload efficiently across multiple resources to maximize throughput, minimize response time, and avoid overloading any single component. When applied to APIs, this concept becomes even more crucial as modern applications increasingly rely on microservices architectures and distributed systems.

The fundamental mechanism involves a load balancer acting as a reverse proxy, receiving client requests and intelligently routing them to available backend servers based on predetermined algorithms. This approach not only enhances performance but also provides fault tolerance and scalability benefits that are essential for enterprise-grade applications.

Types of Load Balancing Algorithms

Different load balancing algorithms serve various use cases and requirements:

- Round Robin: Distributes requests sequentially across available servers, which spreads them evenly when servers have similar capacity and requests cost about the same to process

- Weighted Round Robin: Assigns different weights to servers based on their capacity, directing more traffic to powerful machines

- Least Connections: Routes requests to the server with the fewest active connections, ideal for applications with varying request processing times

- IP Hash: Hashes the client IP address to choose a server, so a given client keeps reaching the same server for as long as the server pool is unchanged

- Geographic: Directs traffic based on user location, reducing latency and helping meet data-residency requirements
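The first four algorithms above can be sketched in a few lines each. This is a minimal illustration rather than a production implementation: the class names, the `pick`/`acquire`/`release` methods, and the server labels are all assumptions made for the example, and a real load balancer would also need thread safety and health awareness.

```python
import hashlib
import itertools


class RoundRobin:
    """Cycle through the servers in order."""

    def __init__(self, servers):
        self._cycle = itertools.cycle(servers)

    def pick(self):
        return next(self._cycle)


class WeightedRoundRobin:
    """Repeat each server in the cycle in proportion to its integer weight."""

    def __init__(self, weights):
        # weights is a hypothetical mapping such as {"big": 2, "small": 1}
        expanded = [s for s, w in weights.items() for _ in range(w)]
        self._cycle = itertools.cycle(expanded)

    def pick(self):
        return next(self._cycle)


class LeastConnections:
    """Pick the server that currently has the fewest active connections."""

    def __init__(self, servers):
        self.active = {s: 0 for s in servers}

    def acquire(self):
        server = min(self.active, key=self.active.get)
        self.active[server] += 1
        return server

    def release(self, server):
        # Call when the request finishes so the count stays accurate.
        self.active[server] -= 1


def ip_hash(client_ip, servers):
    """Map a client IP to a server; stable while the server list is unchanged."""
    digest = hashlib.sha256(client_ip.encode("utf-8")).digest()
    return servers[int.from_bytes(digest[:8], "big") % len(servers)]
```

Note that the simple modulo in `ip_hash` remaps most clients whenever a server is added or removed; consistent hashing is the usual way to limit that churn.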

Hardware vs. Software Load Balancers

Organizations must choose between hardware and software-based solutions, each offering distinct advantages. Hardware load balancers provide dedicated processing power and high throughput but come with higher costs and limited flexibility. Software solutions offer greater customization, easier deployment, and cost-effectiveness, making them increasingly popular in cloud-native environments.

Modern trends favor software-defined approaches, particularly in containerized environments where traditional hardware solutions prove inadequate. Cloud providers like AWS, Google Cloud, and Microsoft Azure offer managed load balancing services that combine the benefits of both approaches while reducing operational overhead.

Implementation Strategies and Best Practices

Successful API load balancing implementation requires careful planning and consideration of several factors. Health checks represent a fundamental component, continuously monitoring server availability and automatically removing failed instances from the rotation. These checks should be lightweight yet comprehensive enough to detect various failure modes.
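One way to picture the health-check loop is the sketch below. It assumes a caller-supplied `probe` function (for example, one that requests a hypothetical `/healthz` endpoint and returns `True` or `False`); the `HealthChecker` class and its threshold parameters are illustrative names, not any particular product's API. Requiring several consecutive results before changing state keeps one slow response from ejecting a healthy server.

```python
class HealthChecker:
    """Track which servers are in rotation based on repeated probe results."""

    def __init__(self, servers, probe, unhealthy_after=3, healthy_after=2):
        self.probe = probe                      # callable: server -> bool
        self.unhealthy_after = unhealthy_after  # failures needed to eject
        self.healthy_after = healthy_after      # successes needed to readmit
        self.healthy = {s: True for s in servers}
        # Consecutive probe results that contradict the current state.
        self._streak = {s: 0 for s in servers}

    def _safe_probe(self, server):
        try:
            return bool(self.probe(server))
        except Exception:
            # A probe that raises (timeout, refused connection) is a failure.
            return False

    def run_once(self):
        """Probe every server once and update its state if a threshold is met."""
        for server in list(self.healthy):
            ok = self._safe_probe(server)
            if ok == self.healthy[server]:
                self._streak[server] = 0
                continue
            self._streak[server] += 1
            threshold = self.healthy_after if ok else self.unhealthy_after
            if self._streak[server] >= threshold:
                self.healthy[server] = ok
                self._streak[server] = 0

    def in_rotation(self):
        return [s for s, ok in self.healthy.items() if ok]
```

In practice `run_once` would be called on a timer, and the routing algorithm would choose only from `in_rotation()`.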

Session persistence, also known as sticky sessions, ensures that users remain connected to the same server throughout their interaction. While this approach can simplify application design, it may create uneven load distribution and weaken failover: if the pinned server fails, any session state stored only on that server is lost. Modern applications increasingly favor stateless designs that eliminate session persistence requirements.

Security Considerations and SSL Termination

Load balancers play a crucial role in application security by providing centralized SSL/TLS termination, DDoS protection, and request filtering capabilities. Terminating TLS at the load balancer offloads encryption work from backend servers while keeping client connections encrypted. Traffic between the load balancer and the backends is unencrypted unless it is re-encrypted, so that segment should run over a trusted network.

Additionally, load balancers can implement rate limiting, IP whitelisting, and other security measures to protect against malicious traffic. Web Application Firewall (WAF) integration provides an additional security layer, filtering out common attack patterns before they reach application servers.
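Rate limiting is often described in terms of a token bucket, and a minimal sketch of that idea follows. The `TokenBucket` class, its parameters, and the injectable `clock` are assumptions for illustration; a real deployment would typically keep one bucket per client or API key and share state across load balancer instances.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity`, refilling at `rate` tokens per second."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self._clock = clock          # injectable so the logic is testable
        self._tokens = float(capacity)
        self._last = clock()

    def allow(self):
        """Return True and spend a token if the request may proceed."""
        now = self._clock()
        elapsed = now - self._last
        self._last = now
        # Refill for the elapsed time, never exceeding the bucket size.
        self._tokens = min(self.capacity, self._tokens + elapsed * self.rate)
        if self._tokens >= 1:
            self._tokens -= 1
            return True
        return False
```

A request for which `allow()` returns `False` would usually be answered with HTTP 429 (Too Many Requests) instead of being forwarded to a backend.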

Monitoring and Performance Optimization

Effective monitoring ensures optimal load balancer performance and helps identify potential issues before they impact users. Key metrics include response times, error rates, connection counts, and server health status. Modern monitoring solutions provide real-time dashboards and automated alerting capabilities.
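As a rough illustration of how such metrics are derived, the sketch below summarizes a batch of request samples into an error rate and latency percentiles. The sample format, a list of `(latency_ms, status_code)` pairs, and the function names are assumptions for the example; the percentile uses the simple nearest-rank method, and only 5xx responses are counted as errors.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    if not values:
        raise ValueError("percentile() needs at least one value")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(samples):
    """Summarize (latency_ms, status_code) pairs for one backend or window."""
    latencies = [latency for latency, _ in samples]
    server_errors = sum(1 for _, status in samples if status >= 500)
    return {
        "requests": len(samples),
        "error_rate": server_errors / len(samples),
        "p50_ms": percentile(latencies, 50),
        "p95_ms": percentile(latencies, 95),
    }
```

Percentiles tend to be more useful than averages for alerting, because a small share of very slow requests can hide behind a healthy-looking mean.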

Performance optimization involves fine-tuning algorithms based on actual traffic patterns and application behavior. A/B testing different configurations can reveal optimal settings for specific use cases. Regular performance reviews ensure that load balancing strategies evolve with changing application requirements.

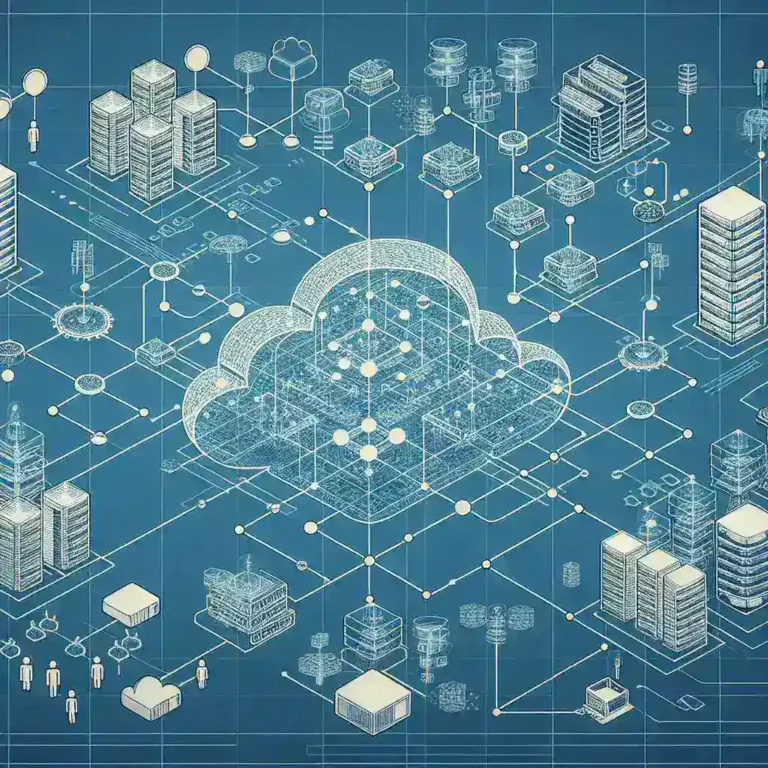

Cloud-Native Load Balancing Solutions

Cloud environments have revolutionized load balancing by offering managed services that automatically scale based on demand. These solutions integrate seamlessly with container orchestration platforms like Kubernetes, providing service discovery and automatic configuration updates.

Microservices architectures benefit significantly from service mesh technologies that provide advanced load balancing capabilities at the application layer. Solutions like Istio and Linkerd offer sophisticated traffic management features including circuit breakers, retries, and canary deployments.
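The circuit breaker pattern mentioned above can be sketched independently of any service mesh. The code below is a simplified illustration, not how Istio or Linkerd implement it; the `CircuitBreaker` class, its parameters, and `CircuitOpenError` are hypothetical names. After a run of failures the breaker stops calling the backend for a cooling-off period, then lets a single trial call through.

```python
import time


class CircuitOpenError(Exception):
    """Raised when a call is rejected because the circuit is open."""


class CircuitBreaker:
    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures  # consecutive failures before opening
        self.reset_after = reset_after    # seconds to wait before a trial call
        self._clock = clock
        self._failures = 0
        self._opened_at = None            # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after:
                raise CircuitOpenError("backend temporarily skipped")
            # Cooling-off period is over: fall through and allow one trial call.
        try:
            result = func(*args, **kwargs)
        except Exception:
            self._failures += 1
            # A failed trial call, or too many failures, (re)opens the circuit.
            if self._opened_at is not None or self._failures >= self.max_failures:
                self._opened_at = self._clock()
            raise
        # Success closes the circuit and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

Failing fast while the circuit is open gives a struggling backend time to recover instead of burying it under retries.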

Cost Considerations and ROI Analysis

While load balancing services require initial investment, they typically provide significant returns through improved uptime, enhanced user experience, and reduced infrastructure costs. The ability to handle traffic spikes without over-provisioning servers results in substantial cost savings over time.

Organizations should consider both direct costs (hardware, software licenses, cloud services) and indirect costs (operational overhead, maintenance, training) when evaluating load balancing solutions. Total Cost of Ownership (TCO) analysis helps make informed decisions about implementation approaches.

Future Trends and Emerging Technologies

The load balancing landscape continues evolving with emerging technologies like artificial intelligence and machine learning. Predictive load balancing algorithms can anticipate traffic patterns and proactively adjust routing decisions to optimize performance.

Edge computing introduces new challenges and opportunities for load balancing, requiring solutions that can operate effectively across distributed edge locations. 5G networks and IoT proliferation will further increase the importance of efficient load distribution mechanisms.

Common Pitfalls and Troubleshooting

Despite their benefits, load balancing implementations can encounter various challenges. Common issues include uneven load distribution, session persistence problems, and health check misconfigurations. Understanding these pitfalls helps organizations avoid costly mistakes and ensure successful deployments.

Troubleshooting load balancing issues requires systematic approaches, including traffic analysis, server performance monitoring, and configuration validation. Proper documentation and change management processes prevent configuration drift and simplify problem resolution.

Conclusion

API load balancing services represent an essential component of modern application architecture, providing the scalability, reliability, and performance required for today’s demanding digital environments. As applications continue growing in complexity and scale, sophisticated load balancing strategies become increasingly critical for success.

Organizations that invest in proper load balancing infrastructure position themselves for sustainable growth while ensuring optimal user experiences. The key lies in understanding specific requirements, choosing appropriate solutions, and implementing best practices that align with business objectives and technical constraints.
